The Node.js file system module, also known as the "fs" module, lets us access and interact with the files on our system. We don't need to install it explicitly, as it comes with Node.js core. We can load the fs module as follows:

var fs = require('fs');

The file system module can be used for file operations such as creating, reading, and deleting files. Most of these operations come in both synchronous and asynchronous forms, so we can pick whichever suits our requirements.
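To make the difference between the two forms concrete, here is a minimal sketch. The scratch file name greeting.txt and the temporary directory are assumptions made for the demo, not something the module requires.

```javascript
const fs = require('fs');
const os = require('os');
const path = require('path');

// Hypothetical scratch file inside a fresh temporary directory.
const dir = fs.mkdtempSync(path.join(os.tmpdir(), 'fs-forms-'));
const file = path.join(dir, 'greeting.txt');

// Synchronous form: each call blocks until the operation has finished.
fs.writeFileSync(file, 'hello');
const syncText = fs.readFileSync(file, 'utf8');
console.log('sync read:', syncText);

// Asynchronous form: the call returns at once and the callback runs later.
fs.readFile(file, 'utf8', function (err, asyncText) {
  if (err) throw err;
  console.log('async read:', asyncText);
});

console.log('this line is printed before the async callback runs');
```

The synchronous calls are easier to follow in small scripts, while the asynchronous ones keep a server free to handle other work while the disk is busy.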

Common use cases of File System module:

Write Files

Read Files

Delete Files

Append Files

Close Files

Read File

To read a file from our system, we use fs.readFile(), a built-in function with the syntax given below.

Syntax

fs.readFile( filename, encoding, callback_function )

For the filename parameter, we can provide just the name of the file if it is located in the same directory, or a full path if it is located elsewhere. The encoding parameter names the encoding used to decode the file, for example utf8. It is optional: if we leave it out, the content is returned as a raw Buffer rather than a string.

The callback function is called once the file has been read. It takes two parameters, err and data. The err parameter indicates whether an error occurred while reading the file, and data contains the content of the file.
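As a small sketch of how the encoding parameter changes what the callback receives, the code below reads the same file twice. The helper kindOf and the temporary directory are assumptions made for the demo and are not part of the fs module.

```javascript
const fs = require('fs');
const os = require('os');
const path = require('path');

// Scratch copy of the demo file in a temporary directory (hypothetical location).
const dir = fs.mkdtempSync(path.join(os.tmpdir(), 'fs-read-'));
const file = path.join(dir, 'FirstFile.txt');
fs.writeFileSync(file, 'My First File.');

// Small helper (not part of fs) that names the kind of value the callback received.
function kindOf(data) {
  return Buffer.isBuffer(data) ? 'Buffer' : typeof data;
}

// Without an encoding, data arrives as a Buffer of raw bytes.
fs.readFile(file, function (err, raw) {
  if (err) throw err;
  console.log(kindOf(raw), raw.length); // Buffer 14

  // With an encoding, data arrives as a decoded string.
  fs.readFile(file, 'utf8', function (err, text) {
    if (err) throw err;
    console.log(kindOf(text), text); // string My First File.
  });
});
```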

To create a demo for this function let's create a text file. Create a text file named “FirstFile.txt” with “My First File.” as content.

Now let's create a file named “FileRead.js”, to read the contents of the text file that we created.

Example

var http = require('http');

var fs = require('fs');

http.createServer(function (req, res) {

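fs.readFile('FirstFile.txt', 'utf-8', function (err, data) {

// If the read fails (for example, the file is missing), data is undefined.

if (err) {

res.writeHead(500, {'Content-Type': 'text/plain'});

return res.end('Could not read FirstFile.txt');

}

res.writeHead(200, {'Content-Type': 'text/html'});

res.write(data);

return res.end();

});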

}).listen(8080);

console.log("File Read!");

Note

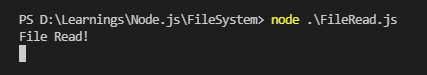

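If the output is undefined, an error occurred while reading the file (for example, the file was not found), so data was never filled in. Because fs.readFile() is asynchronous, Node.js starts reading the file and carries on executing the rest of the code in the meantime. The callback is called only once the file has been read, so the "File Read!" statement is printed first and the contents of the file follow.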

Run the file

node .\FileRead.js

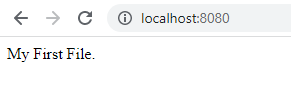

Opening http://localhost:8080 in a browser displays the contents of the file.
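The undefined case mentioned in the note can be sketched as follows. The helper readOrExplain and the file name NoSuchFile.txt are hypothetical, and the path is assumed not to exist.

```javascript
const fs = require('fs');
const os = require('os');
const path = require('path');

// A path that is assumed not to exist: nothing is ever written into this fresh directory.
const dir = fs.mkdtempSync(path.join(os.tmpdir(), 'fs-missing-'));
const missing = path.join(dir, 'NoSuchFile.txt');

// Hypothetical helper: hands either the file content or a readable message to done().
function readOrExplain(file, done) {
  fs.readFile(file, 'utf8', function (err, data) {
    if (err) {
      // data is undefined here; err.code says why (ENOENT means "no such file").
      return done('Could not read file: ' + err.code);
    }
    done(data);
  });
}

readOrExplain(missing, function (message) {
  console.log(message); // Could not read file: ENOENT
});
```

Checking err before touching data is what keeps a server like the one above from trying to send undefined to the browser.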

Create File

Files can be created in Node.js in several ways. Some of the methods that can be used are appendFile(), open(), and writeFile(). Let's look into the details of each function and when to use it.

fs.appendFile()

The fs.appendFile() method of Node.js is used to asynchronously append data to a file. If the file doesn't exist, a new file is created with the content specified.

Syntax

fs.appendFile(filepath, data, options, callback);

Here filepath is the path of the file, provided as a string, and data is the data that we need to append to the file. The optional options parameter specifies the encoding, mode, and flag, and callback is a function that is called once the data has been appended to the file.

Example

var fs = require('fs');

fs.appendFile('AppendFile.txt', 'Append File demo!', function (err) {

if (err) throw err;

console.log('Content appended/file created.');

});

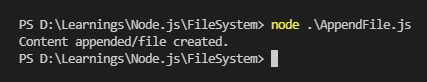

Run the file:

A new file named 'AppendFile.txt' will be created if it doesn’t exist and if the file exists the text provided in the code will be appended.
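Both behaviours described above (create on the first call, append on the second) can be seen in one short sketch. The helper appendTwice and the temporary directory are assumptions made for the demo.

```javascript
const fs = require('fs');
const os = require('os');
const path = require('path');

// Fresh temporary directory, so the file is guaranteed not to exist at the start.
const dir = fs.mkdtempSync(path.join(os.tmpdir(), 'fs-append-'));

// Hypothetical helper: appends two lines to `file`, then reads the result back.
function appendTwice(file, done) {
  // First call: the file does not exist yet, so it is created.
  fs.appendFile(file, 'first line\n', function (err) {
    if (err) return done(err);
    // Second call: the file exists now, so the text is added at the end.
    fs.appendFile(file, 'second line\n', function (err) {
      if (err) return done(err);
      fs.readFile(file, 'utf8', done);
    });
  });
}

appendTwice(path.join(dir, 'AppendFile.txt'), function (err, text) {
  if (err) throw err;
  console.log(JSON.stringify(text)); // "first line\nsecond line\n"
});
```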

fs.open()

The fs.open() method is used to create, read, or write a file. Writing to a file using open() method depends on the second parameter flag, passed to the method. If we pass the parameter as “w” then the specified file is opened for writing. If the file doesn’t exist then a new blank file is created with the name specified.

Syntax

fs.open(path, flags, mode, callback)

The path parameter holds the name of the file if it is located in the same directory, or the entire path if the file is located elsewhere.

The flags parameter indicates how the file is to be opened, for example r for reading, w for writing, or r+ for reading and writing. The values that we can provide are r, r+, rs, rs+, w, wx, w+, wx+, a, ax, a+ or ax+. The default value is r.

The mode parameter sets the permissions of the file and is only used when the file is created. The default value is 0o666 (readable and writable).

The callback function is called once the file has been opened. It takes two parameters: err, and fd, the file descriptor of the opened file.
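As an illustration of how the flag changes the behaviour, the sketch below uses wx, which creates the file but fails if it already exists. The helper createExclusive and the permission value 0o644 are choices made for this demo.

```javascript
const fs = require('fs');
const os = require('os');
const path = require('path');

const dir = fs.mkdtempSync(path.join(os.tmpdir(), 'fs-flags-'));

// Hypothetical helper: creates `file` only if it does not exist yet, then closes it.
function createExclusive(file, done) {
  // 'wx' = open for writing, fail if the path exists; 0o644 = permissions for the new file.
  fs.open(file, 'wx', 0o644, function (err, fd) {
    if (err) return done(err);
    fs.close(fd, done);
  });
}

const target = path.join(dir, 'OpenFile.txt');
createExclusive(target, function (err) {
  if (err) throw err;
  console.log('created');
  // The second attempt fails because the file now exists.
  createExclusive(target, function (err) {
    console.log(err.code); // EEXIST
  });
});
```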

Let’s try to create a blank file using the open method of the fs module of Node.js.

var fs = require('fs');

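fs.open('OpenFile.txt', 'w', function (err, fd) {

if (err) throw err;

console.log('New file created!');

// Close the file descriptor once we are done with it.

fs.close(fd, function (err) {

if (err) throw err;

});

});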

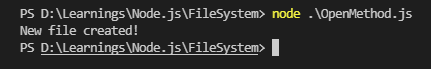

Run the file.

The fs.readFile() method is used only for reading the file and fs.writeFile() method is only for writing to the file, whereas fs.open() method does several operations on a file such as creating, reading, or writing.
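To show what working with fs.open() directly looks like, here is a minimal sketch that opens a file, writes through the file descriptor with fs.write(), and closes it again. The helper writeViaDescriptor is hypothetical. For a simple one-shot write, fs.writeFile() does the same job in a single call.

```javascript
const fs = require('fs');
const os = require('os');
const path = require('path');

const dir = fs.mkdtempSync(path.join(os.tmpdir(), 'fs-open-'));

// Hypothetical helper: open -> write -> close, reporting the first error (if any).
function writeViaDescriptor(file, text, done) {
  fs.open(file, 'w', function (err, fd) {
    if (err) return done(err);
    fs.write(fd, text, function (writeErr) {
      // Always close the descriptor, even if the write failed.
      fs.close(fd, function (closeErr) {
        done(writeErr || closeErr);
      });
    });
  });
}

const target = path.join(dir, 'OpenFile.txt');
writeViaDescriptor(target, 'written through a descriptor', function (err) {
  if (err) throw err;
  console.log(fs.readFileSync(target, 'utf8')); // written through a descriptor
});
```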

fs.writeFile()

The fs.writeFile() method asynchronously writes the specified data to a file. If the file already exists, its content is replaced. If the file doesn't exist, a new file is created with the content provided.

Syntax

fs.writeFile(path, data, options, callback)

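The path parameter here can be a string, Buffer, URL, or file descriptor (an integer) that identifies the file to write to. Using a file descriptor makes it behave similarly to the fs.write() method. The data parameter is the content to write, the optional options parameter sets the encoding, mode, and flag, and the callback is called with a possible error once the write has completed.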

Example

var fs = require('fs');

fs.writeFile('WriteFile.txt', 'New file created with content!', function (err) {

if (err) throw err;

console.log('File created and saved!');

});

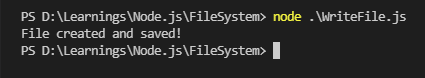

Run the file.

Update Files

To update the files there are some methods from the file system module that can be used.

Methods that can be used for updating the files are fs.appendFile() and fs.writeFile().

As we already have the description of the function fs.appendFile() in the create file section, let’s have a look at an example.

var fs = require('fs');

fs.appendFile('AppendFile.txt', ' Appended text...', function (err) {

if (err) throw err;

console.log('Updated!');

});

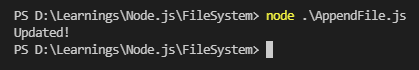

Running this file will append the data to the existing file.

For details of the fs.writeFile() function please refer to create file section. Looking at an example of fs.writeFile() function:

var fs = require('fs');

fs.writeFile('WriteFile.txt', 'File with name WriteFile updated.', function (err) {

if (err) throw err;

console.log('File updated and saved!');

});

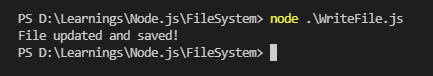

Running the file replaces the contents of WriteFile.txt with the new text and saves the file.
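The difference between the two update methods can be sketched side by side: fs.writeFile() replaces what is there, while fs.appendFile() adds to the end. The helper overwriteThenAppend and the temporary directory are assumptions made for the demo.

```javascript
const fs = require('fs');
const os = require('os');
const path = require('path');

const dir = fs.mkdtempSync(path.join(os.tmpdir(), 'fs-update-'));

// Hypothetical helper: writes twice, appends once, then reads the file back.
function overwriteThenAppend(file, done) {
  fs.writeFile(file, 'one', function (err) {
    if (err) return done(err);
    // A second writeFile() replaces 'one' entirely.
    fs.writeFile(file, 'two', function (err) {
      if (err) return done(err);
      // appendFile() keeps 'two' and adds to the end.
      fs.appendFile(file, ' three', function (err) {
        if (err) return done(err);
        fs.readFile(file, 'utf8', done);
      });
    });
  });
}

overwriteThenAppend(path.join(dir, 'WriteFile.txt'), function (err, text) {
  if (err) throw err;
  console.log(text); // two three
});
```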

Delete Files

To delete files using Node.js, we can use the method fs.unlink(). This function removes a file; it does not work on directories. To delete directories we can use the method fs.rmdir().

Syntax

fs.unlink(path, callback)

This method takes two parameters. The first is the path of the file that we need to remove, which can be a string, Buffer, or URL. The second is the callback, a function that is called with a possible error once the file has been removed.

Example

var fs = require('fs');

fs.unlink('SampleFile.txt', function (err) {

if (err) throw err;

console.log('SampleFile deleted...');

});

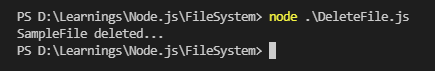

Running the file deletes the file named in the code.
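The example above throws if SampleFile.txt is missing. As one possible alternative, the sketch below treats a missing file as "nothing to delete". The helper removeIfPresent is hypothetical, and relying on the ENOENT error code is a design choice for this demo.

```javascript
const fs = require('fs');
const os = require('os');
const path = require('path');

const dir = fs.mkdtempSync(path.join(os.tmpdir(), 'fs-unlink-'));

// Hypothetical helper: done(err, removed) where removed says whether a file was deleted.
function removeIfPresent(file, done) {
  fs.unlink(file, function (err) {
    if (err && err.code === 'ENOENT') return done(null, false); // nothing to delete
    if (err) return done(err);
    done(null, true);
  });
}

const target = path.join(dir, 'SampleFile.txt');
fs.writeFileSync(target, 'temporary content');

removeIfPresent(target, function (err, removed) {
  if (err) throw err;
  console.log(removed); // true
  removeIfPresent(target, function (err, removedAgain) {
    if (err) throw err;
    console.log(removedAgain); // false
  });
});
```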

Rename Files

We can also rename files using Node.js with the function fs.rename(). The function renames the file at oldPath to the pathname provided as newPath.

Syntax

fs.rename(oldPath, newPath, callback)

The parameters are oldPath, newPath, and the callback function. Here oldPath is the path of the file that already exists, and newPath is the new name that we want to give it. The callback function is called once the rename has completed.

In the case that newPath already exists, it will be overwritten. If there is a directory at newPath, an error will be raised instead. No arguments other than a possible exception are given to the completion callback.

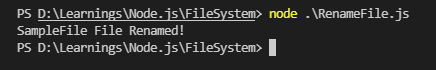

Example

var fs = require('fs');

fs.rename('SampleFile.txt', 'SampleFileRenamed.txt', function (err) {

if (err) throw err;

console.log('SampleFile File Renamed!');

});

Running the file renames SampleFile.txt to SampleFileRenamed.txt.
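The overwrite behaviour described earlier, where an existing file at newPath is replaced, can be sketched as follows. The helper renameOver and the file contents are assumptions made for the demo.

```javascript
const fs = require('fs');
const os = require('os');
const path = require('path');

const dir = fs.mkdtempSync(path.join(os.tmpdir(), 'fs-rename-'));

// Hypothetical helper: renames oldPath over an existing newPath and reports the outcome.
function renameOver(oldPath, newPath, done) {
  // Set up both files first, so that newPath already exists.
  fs.writeFileSync(oldPath, 'from old file');
  fs.writeFileSync(newPath, 'already here');
  fs.rename(oldPath, newPath, function (err) {
    if (err) return done(err);
    done(null, {
      oldExists: fs.existsSync(oldPath),
      newContent: fs.readFileSync(newPath, 'utf8')
    });
  });
}

renameOver(
  path.join(dir, 'SampleFile.txt'),
  path.join(dir, 'SampleFileRenamed.txt'),
  function (err, result) {
    if (err) throw err;
    console.log(result.oldExists, result.newContent); // false from old file
  }
);
```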

After going through this article and trying out the code snippets provided, you will be able to work with all the basic file operations such as create, update, delete and rename. Please feel free to post any feedback, and let me know if you want me to work on any content that would help you learn Node.js further.